The opinions expressed in this article are my own and do not necessarily reflect those of my clients or employer. I use Anthropic’s Claude to do most of my writing.

In an earlier post, I argued that AI agents are much better at helping us do the thing than at doing the thing themselves, and that before they can do much of anything useful, they need somewhere to live. An operating system. Trust, memory, orchestration, all the boring plumbing that makes autonomy actually work.

That was about moving agents from task execution to something closer to judgment. Navigating real workflows instead of running isolated demos. The reactions were interesting, but not in the way I expected. Nobody really wanted to talk about orchestration. What people kept asking, in various polite and less polite ways, was: OK, but can these systems (help us) think better? Not think faster. Not automate the thinking we’re already doing. Actually think in ways we currently don’t.

Which, it turns out, is a much harder question. And the answer probably has less to do with AI than with something embarrassing about how human reasoning actually works.

The decision that won’t get made

Here’s a situation. You’re on a leadership team. The question on the table is whether to embed generative AI into your core product. The first-mover faction says speed wins. The fast-follower faction points out (correctly, with examples) that plenty of companies moved early and spent two years building on the wrong architecture. The wait-and-see faction wants more data. Everyone has a slide deck.

Months pass. The decision doesn’t get made. Or (and this is the more common and worse outcome) it gets made for bad reasons. Someone was more persuasive in the last meeting. A board member mentioned what a competitor was doing. People got tired.

The standard explanation is “analysis paralysis,” which sounds like a diagnosis but is really just a description. The team had too much information and too many options and couldn’t decide. Sure. But why couldn’t they decide?

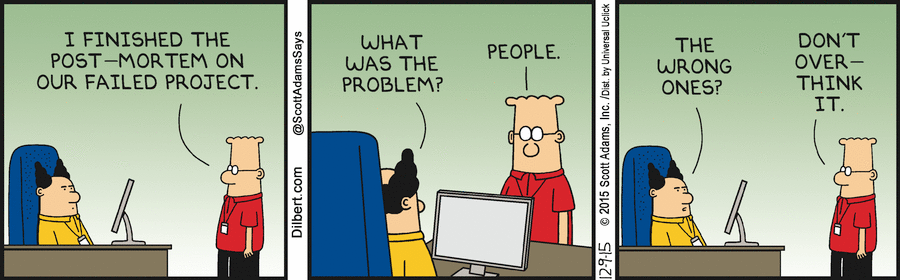

Here’s a theory: the team wasn’t stuck because they had too many options. They were stuck because everyone was reasoning about the problem the same way, and nobody could see it. It’s hard to notice that you’re trapped in a single thinking framework while you’re using it, in the same way it’s hard to notice you have an accent. You just sound normal to yourself.

Analysis paralysis, in a lot of cases, is a reasoning-mode problem. You’re locked into one way of thinking when the situation is screaming for three or four.

If that’s right, it matters a lot for where Agent OS goes next.

Five thinking approaches, one brain

There are, broadly speaking, five reasoning frameworks that show up in most serious decision-making. Over a long enough stretch of time, most of us use most of them. But in the moment, we’re fluent in one, maybe two, and we reach for those the way you reach for a familiar coffee mug: not because it’s the best vessel for the job, but because it’s there and it’s yours.

First Principles Thinking strips a problem to raw materials and rebuilds from scratch. If you listen to tech podcasts, it’s the most popular square on the buzzword bingo card. The famous example is Elon Musk looking at the cost of a rocket, deciding the market price of components was basically a fiction, and asking what the underlying metals and carbon fiber actually cost per pound. That’s how you get SpaceX.

The less famous application is something like a telco facing margin pressure on its wireless network. The standard move is to optimize: renegotiate spectrum leases, densify towers, squeeze more efficiency out of existing infrastructure. First principles asks a ruder question. Why does the infrastructure need to be terrestrial at all? Low-Earth-orbit satellites change the economics entirely. You don’t get there by optimizing towers. You get there by asking whether towers are the right unit of analysis, which nobody in the room was doing because everyone in the room grew up building towers.

Analogical Thinking is pattern-matching across domains. I do this all the time. My teams are probably tired of me saying “we are chefs, tools are ingredients in a pantry…”

A streaming platform looking at subscriber churn might study mobile gaming engagement loops. The content has nothing in common, but the churn dynamics are structurally the same.

Where this gets fun is when the analogy is weird. A telco trying to reduce call center volume would naturally look at what other telcos do. But Netflix basically solved a version of this problem by replacing reactive customer support with predictive personalization that fixed the friction before anyone picked up a phone. This “literally” (I know I’m using the word… and I mean it) happened to me back in 2015. I call it “the best interaction I never had,” and I built a proactive service offering out of that experience. Nobody in telecom was looking at a content company for call center strategy, which is exactly why it’s a useful place to look.

Systems Thinking maps how things connect to each other. It’s the natural lens for a tech company evaluating cloud resilience or a telco doing network capacity planning.

The non-obvious application: a media company negotiating content licensing deals. This looks like procurement. But each licensing decision quietly reshapes what the recommendation algorithm serves up, which changes viewer behavior, which alters what content gets greenlit next, which changes negotiating leverage for the next round of deals. It’s a feedback loop masquerading as a purchase order. Many media companies negotiate these like they’re buying office supplies.

Design Thinking starts with “how does this feel to a human being.” When a telco sunsets 3G or migrates customers to a new billing platform, the playbook is all systems engineering: cutover timelines, device compatibility matrices, capacity planning. Design thinking asks one question nobody else in the room is asking: what happens to the 80-year-old customer whose flip phone just stopped working? Suddenly the migration isn’t a technical project. It’s a churn problem, a brand problem, and (depending on how many 80-year-olds you have) potentially a regulatory problem. No Gantt chart is going to catch that.

Critical Thinking stress-tests everything else. A media company debating a big bet on AI-generated programming might have pitch decks that look fantastic and competitive pressure that feels urgent. Critical thinking is the person in the room (usually not a popular person in that moment) who asks: what has to be true for this to work at scale, and what evidence do we actually have beyond our own pilots? That question doesn’t kill the momentum. It redirects it toward the bets that might survive contact with actual audiences.

Where it breaks down

So here’s the problem. When you’re deep in a first-principles decomposition, you don’t naturally pause and think, “Actually, is there just a good analogy for this that would take five minutes instead of five weeks?” When you’re mapping a system’s interdependencies, you can lose hours modeling feedback loops and completely miss that the underlying assumption needs to be questioned, not modeled.

The framework you know best feels right. It always feels right. That’s what makes it your framework. As for simultaneously running five different reasoning approaches, holding their outputs in your head, noticing where they agree and disagree, and then synthesizing all of that into a decision? Look, there are probably people who can do this. They are not most people. They are not most teams. It’s not a skills problem; it’s a bandwidth problem.

The issue isn’t picking the right framework. The issue is that the situation needs several frameworks running at once, and we don’t have the cognitive hardware to do that.

The algorithm for thinking about work, applied to thinking itself

There’s a useful parallel here. Musk has what he calls “the algorithm”: a five-step process he reportedly repeats to an annoying degree in production meetings at Tesla and SpaceX. The steps: question every requirement. Delete any part or process you can. Simplify and optimize what’s left. Accelerate the cycle time. And only then, automate.

The order is the point. Most organizations jump straight to step five. They try to automate a process that nobody has bothered to question, simplify, or delete. By Musk’s own admission: “The big mistake in Nevada and at Fremont was that I began by trying to automate every step. We should have waited until all the requirements had been questioned, parts and processes deleted, and the bugs were shaken out.”

Now apply that same sequence not to a production line, but to how organizations reason through decisions:

Question every requirement. Which thinking frameworks are actually being used? Who decided this is a systems problem, and why? Is the team defaulting to financial modeling because the CFO is in the room, or because financial modeling is actually the right lens?

Delete. Which lines of reasoning are producing noise rather than signal? If the analogical pass keeps generating comparisons to companies in unrelated industries with different unit economics, maybe that pass needs to be dropped for this particular problem.

Simplify. Of the frameworks that are producing useful output, where are they agreeing? Agreement across frameworks is a strong signal. That’s where the team should focus instead of trying to reconcile every divergence.

Accelerate. How quickly can the team cycle through multiple reasoning modes on the same question? Right now, this takes weeks of meetings. What if it took hours?

Automate. And only now, after the reasoning process itself has been questioned, trimmed, and simplified, does it make sense to ask whether agents can take on parts of it.

This is where the interesting question lands: can agents actually do these things?

Not in the abstract. Concretely. Can an agent question whether a team is stuck in a single reasoning mode? Can it delete an unproductive line of analysis? Can it simplify by identifying where three different reasoning approaches are converging on the same conclusion? Can it accelerate the cycle from weeks to hours by running multiple reasoning passes in parallel?

The answer, today, is a qualified yes, if the system is designed correctly. Current LLMs can be constrained to reason within specific frameworks. They can be prompted to decompose a problem to first principles, or to search for analogies, or to map system dynamics. No single pass is as good as a world-class expert in that mode. But the ability to run five of them simultaneously, and then compare outputs? That’s something no human team does well, because of the cognitive limits described above. It’s a capability the technology has that we don’t.

The harder problem (and this is where the real work is) is the layer that sits above those passes. The thing that evaluates whether a given line of reasoning is actually useful or just sounds rigorous. The thing that decides when to switch. The thing that synthesizes. That’s not a prompt. That’s an architecture.
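For the easy half of this, here’s a minimal Python sketch of running the five framework-constrained passes in parallel. Everything in it is an assumption for illustration: `call_model` is a hypothetical stand-in for whatever LLM API you actually use, and the prompt wording is mine, not a tested prompt set. The point is the shape: one question, five constrained framings, run side by side instead of sequentially over weeks of meetings.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict

# Illustrative framework constraints. The wording is an assumption, not a tested prompt set.
FRAMEWORK_PROMPTS = {
    "first_principles": "Strip this problem to its underlying costs and assumptions, then rebuild it: {q}",
    "analogical": "Find structurally similar problems in other industries and what worked there: {q}",
    "systems": "Map the feedback loops and second-order effects of this decision: {q}",
    "design": "Describe what the customer actually experiences under each option: {q}",
    "critical": "List what has to be true for each option to work, and where evidence is thinnest: {q}",
}


def call_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; swap in your provider's client here."""
    return f"[model output for: {prompt[:40]}...]"


def run_passes(question: str) -> Dict[str, str]:
    """Run one framework-constrained pass per framework, concurrently."""
    with ThreadPoolExecutor(max_workers=len(FRAMEWORK_PROMPTS)) as pool:
        futures = {
            name: pool.submit(call_model, template.format(q=question))
            for name, template in FRAMEWORK_PROMPTS.items()
        }
        # .result() blocks until each pass finishes (and re-raises its errors).
        return {name: future.result() for name, future in futures.items()}


if __name__ == "__main__":
    for name, output in run_passes("Should we embed generative AI into our core product?").items():
        print(f"{name}: {output}")
```

Threads are a reasonable fit here because real model calls spend most of their time waiting on the network. Notice that nothing in this sketch judges the outputs; that layer is the architecture problem.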

Thinking about thinking

There’s a term for that architecture: meta-reasoning. It simply means deciding how to think, rather than just thinking. Which sounds like a philosophy seminar, but it’s actually pretty practical.

You’ve done it. Everyone has. You’re an hour into analyzing something and you stop and think, “Wait, this is circular. What if instead of decomposing the problem, the better move is just to find someone who solved something similar?” That pause, the moment where you step outside the reasoning and evaluate the reasoning, that’s meta-reasoning.

The problem is that we do it inconsistently, usually by accident, and almost never when the stakes are high. Under pressure, in a room full of people, with a deadline, the last thing anyone does is step back and say, “Hey, maybe we’re all thinking about this wrong.” It’s the cognitive equivalent of asking for directions. Everyone knows they should, and almost nobody does.

Now, someone is going to read this and say: “That’s just a good facilitator. A skilled chief of staff or strategy lead does exactly this: reads the room, notices when the team is stuck in one mode, and redirects.” And that’s fair. Some people are genuinely excellent at this. The problem is that it’s rare, it’s expensive, and it doesn’t scale. That one brilliant facilitator can only be in one room at a time. They have their own biases about which frameworks to reach for. They get tired. They have political constraints on what they can say. And no organization has enough of them to cover every decision that matters.

The question is whether systems could do this more consistently. Not replacing that facilitator, but being available in the rooms where there isn’t one.

What This Actually Looks Like

Let’s make it concrete. Go back to the AI-in-product decision from the opening. The leadership team is stuck. Here’s what a meta-reasoning layer does with that problem.

A first-principles pass decomposes it: what does it actually cost to build this capability, what’s the minimum viable version, and what assumptions about build-vs-buy are being treated as given that shouldn’t be? An analogical pass looks for structural parallels: which companies in adjacent industries embedded AI into their core product early, and what patterns emerge from the ones that succeeded versus failed? A systems pass maps second-order effects: how does this decision interact with the product roadmap, the engineering hiring plan, and the partnerships in flight? A design pass asks what the customer actually experiences: does this make the product better in a way they’d notice and pay for? And a critical pass stress-tests the lot: what has to be true for any of this to work, and where is the evidence thinnest?

The interesting part isn’t any individual pass. It’s the controller that reads the outputs, notices where they contradict each other (and those contradictions are often the most valuable signal), and synthesizes them into something the team can actually use.

That’s the meta-reasoning controller. Less a brain, more an orchestra conductor who doesn’t play an instrument but knows when the string section has been going on too long.

The honest caveat: the hard problem isn’t generating the individual passes. It’s evaluating whether the output is actually good. A first-principles decomposition that sounds rigorous but rests on a flawed assumption is worse than no decomposition at all. The controller has to catch that, and right now, that’s still more art than science. But “more art than science” is also how most strategic decision-making works today. The bar isn’t perfection. It’s whether the system produces better inputs than the team would generate on its own.
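To show what the controller’s bookkeeping might look like, here’s a toy sketch in Python. It assumes (a big assumption) that each pass has already been reduced to a single recommendation label; `PassResult`, `synthesize`, and the labels are hypothetical names for illustration. It finds where the passes converge and lists the pairs that contradict each other, which is the easy part. It deliberately does not attempt the hard part the caveat above describes: judging whether a pass that sounds rigorous actually is.

```python
from collections import Counter
from dataclasses import dataclass
from itertools import combinations
from typing import List


@dataclass
class PassResult:
    framework: str       # e.g. "systems"
    recommendation: str  # hypothetical label, e.g. "move_now", "fast_follow", "wait"
    rationale: str = ""


def synthesize(results: List[PassResult]) -> dict:
    """Toy controller: report where passes converge and which pairs contradict."""
    if not results:
        raise ValueError("need at least one pass result")
    votes = Counter(r.recommendation for r in results)
    # Most common recommendation; on a tie, the first-seen label wins.
    top, top_count = votes.most_common(1)[0]
    # Pairs of frameworks that reached different conclusions. These are often
    # the most valuable thing to put in front of the team.
    contradictions = [
        (a.framework, b.framework)
        for a, b in combinations(results, 2)
        if a.recommendation != b.recommendation
    ]
    return {
        "leading_view": top,
        "supported_by": sorted(r.framework for r in results if r.recommendation == top),
        # Only treat it as convergence if a strict majority of passes agree.
        "has_majority": top_count > len(results) / 2,
        "contradictions": contradictions,
    }


if __name__ == "__main__":
    passes = [
        PassResult("first_principles", "fast_follow"),
        PassResult("analogical", "fast_follow"),
        PassResult("systems", "fast_follow"),
        PassResult("design", "move_now"),
        PassResult("critical", "wait"),
    ]
    print(synthesize(passes))
```

A real controller would need a step that turns free-text passes into something comparable, and that extraction is itself a place where a rigorous-sounding but flawed pass can slip through.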

The Evolution of Agent OS

This is where Agent OS comes back in. The arc of these systems has a direction: Execution → Judgment → Decisioning. First-generation agents did tasks. Second-generation agents are learning to navigate judgment calls inside workflows. Third-generation agents will help orchestrate how people reason through hard problems in the first place.

That third generation requires an architecture that looks something like this: framework-specific agents (or more accurately, framework-constrained reasoning passes) running in parallel, a meta-reasoning controller that monitors, evaluates, and synthesizes their outputs, and a reinforcement loop that learns over time which combinations of reasoning work for which kinds of problems. Not because someone wrote the rules, but because the patterns emerged from use.
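One simple way to sketch that reinforcement loop is a multi-armed bandit: for each kind of problem, try a combination of reasoning passes, record how useful the result turned out to be, and gradually favor what works. The sketch below uses epsilon-greedy selection, which is my choice for illustration, not something the architecture prescribes. `ComboSelector`, the problem-type labels, and especially the reward signal are all assumptions; in practice, getting a trustworthy “was this useful?” score is most of the difficulty.

```python
import random
from collections import defaultdict
from itertools import combinations
from typing import Optional

FRAMEWORKS = ["first_principles", "analogical", "systems", "design", "critical"]
# Candidate "arms": every combination of three frameworks (an illustrative choice).
COMBOS = [frozenset(c) for c in combinations(FRAMEWORKS, 3)]


class ComboSelector:
    """Epsilon-greedy bandit that learns, per problem type, which combos pay off."""

    def __init__(self, epsilon: float = 0.1, seed: Optional[int] = None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        # (problem_type, combo) -> [total_reward, times_tried]
        self.stats = defaultdict(lambda: [0.0, 0])

    def choose(self, problem_type: str) -> frozenset:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(COMBOS)  # explore: try something else
        # Exploit: highest average reward so far (untried combos count as 0.0).
        return max(COMBOS, key=lambda combo: self._mean(problem_type, combo))

    def record(self, problem_type: str, combo: frozenset, reward: float) -> None:
        """Log how useful the synthesis turned out to be (hypothetical 0-1 score)."""
        entry = self.stats[(problem_type, combo)]
        entry[0] += reward
        entry[1] += 1

    def _mean(self, problem_type: str, combo: frozenset) -> float:
        total, tries = self.stats.get((problem_type, combo), (0.0, 0))
        return total / tries if tries else 0.0


if __name__ == "__main__":
    selector = ComboSelector(epsilon=0.1, seed=42)
    combo = selector.choose("build_vs_buy")
    print(sorted(combo))
    selector.record("build_vs_buy", combo, reward=0.8)
```

Usage is two calls per decision: `choose(problem_type)` to pick which passes to run, and `record(problem_type, combo, reward)` once you know how it went. The `epsilon` parameter keeps a small share of decisions exploring other combinations, so the system doesn’t lock into its own favorite coffee mug.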

Agent OS needs to evolve from orchestrating work to orchestrating thinking. And unlike the facilitator, who can only be in one room at a time, this layer can sit behind every consequential decision in the organization. Consistently, without fatigue, and without the political constraints that keep smart people from saying “I think we’re approaching this wrong.”

The Quiet Part

If this happens (and most of the underlying components already exist, so “if” is doing less work than it might seem), it probably won’t be dramatic. Meta-reasoning won’t show up as a product launch. It’ll show up as a feature that offers a different perspective when a team has been stuck on the same slide for forty minutes. Or a nudge: “This has been treated as a systems problem for the last three meetings. What would a first-principles view look like?”

Over time, those nudges accumulate. The organizations that build this into their intelligence layer will make differently shaped decisions. Not just faster, but drawing on a wider range of reasoning than any team could sustain on its own.
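The nudge itself is the most mechanical piece of all this. Here’s a minimal Python sketch, assuming something upstream (hypothetical here) has already tagged each meeting with the framework that dominated it. If the last few meetings all used the same lens, it suggests the alternative the team has used least.

```python
from collections import Counter
from typing import List, Optional

FRAMEWORKS = ["first_principles", "analogical", "systems", "design", "critical"]


def _label(framework: str) -> str:
    """Readable label with the right indefinite article, e.g. 'an analogical'."""
    name = framework.replace("_", "-")
    return ("an " if name[0] in "aeiou" else "a ") + name


def nudge(meeting_modes: List[str], streak: int = 3) -> Optional[str]:
    """Return a nudge if the last `streak` meetings were dominated by one framework.

    `meeting_modes` is a hypothetical per-meeting log of the dominant framework,
    oldest first.
    """
    recent = meeting_modes[-streak:]
    if len(recent) < streak or len(set(recent)) != 1:
        return None  # no rut detected
    stuck = recent[0]
    usage = Counter(meeting_modes)
    # Suggest the least-used alternative; ties go to the earlier entry in FRAMEWORKS.
    suggestion = min((f for f in FRAMEWORKS if f != stuck), key=lambda f: usage[f])
    return (
        f"This has been treated as {_label(stuck)} problem for the last {streak} "
        f"meetings. What would {_label(suggestion)} view look like?"
    )


if __name__ == "__main__":
    print(nudge(["systems", "systems", "systems"]))
```

The detection is deliberately dumb; the value is in asking the question at all, in a room where nobody else would.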

The spreadsheet didn’t replace the analyst. The autopilot didn’t replace the pilot. Meta-reasoning won’t replace anyone’s judgment. It’ll just make visible the cognitive ruts we can’t see in ourselves, and open up lines of reasoning we weren’t going to find on our own.

The future of AI probably isn’t better answers. It’s better ways of arriving at answers. Which starts, somewhat awkwardly, with building systems that think about thinking.
